Jimmy Chen’s Poster

How much do inappropriate responses impede clinicians’ engagement and therapeutic alliance with a virtual patient?

by Jimmy Pengyu Chen1,2, Jade Wei1,2, Shibani Datta2,3, Runqin Shi1,2,3, & Sarah Bloch-Elkouby2,3

1 Teachers College, Columbia University in the City of New York

2 Icahn School of Medicine at Mount Sinai in New York City

3 Ferkauf Graduate School of Psychology, Yeshiva University

Background:

Leveraging technology to augment and transform the process of psychotherapy has been a constant trend of efforts, from the use of telephone decades ago to the artificial intelligence (AI) today. The concerns have risen at the same time, cautioning that the use technology will lead to miscommunication due to missing non-verbal cues, inhibit inspirational thinking associated with self-discovery, and the quality of therapeutic alliance (Anthony, 2003). While these concerns remain valid and difficult to remedy, determining the impact of technological shortcomings becomes a vital index for the feasibility of such technology.

The virtual patient (VP) can be used as a pedagogic tool for diagnostic psychiatric interviews. As an emerging technology, it remained inferior to trained human actors in perceived authenticity, leading to lower diagnostic accuracy in medical history assessments (Fink et al., 2021). However, the technology offers scalability and consistency, which is difficult for human actors in a psychiatric setting. A study conducted in 2016 with preliminary VP technology recruited 36 participants to conduct an interview on a VP with depression symptoms (Dupuy et. Al, 2020). Although the VP received positive comments overall, it only offered predetermined options as conversational input and corresponding pre-recorded outputs.

The development of artificial intelligence provided an unprecedented opportunity that enables VPs to engage in free conversations. From rule- based chatbots before 2000s to neural network-based intent-matching around 2010s to transformer based-large language models currently, the evolution has significantly enhanced the flexibility and sophistication of conversational agents (Al-Amin, 2024).

Objective:

The current study seeks to investigate the feasibility of utilizing a VP in training new and future clinicians. Specifically, despite the sophistication of VP technology, it still produces a substantial number of mistakes that impede the flow of conversation, sabotage therapeutic alliance, and interfere with research implementation, measurements, and educational effectiveness of the simulated interaction. Aims

- Quantitatively measure the impact of technical errors in the conversation on therapeutic alliance perceived by the clinician

- Explore whether such impact is moderated by the race of VP.

Hypotheses

- The number of technical errors would be positively correlated with the rupture of therapeutic alliance and negatively corrected with perceived alliance.

- Interviewing with Black Noah will exacerbate the damage of technical errors on therapeutic alliance.

Method:

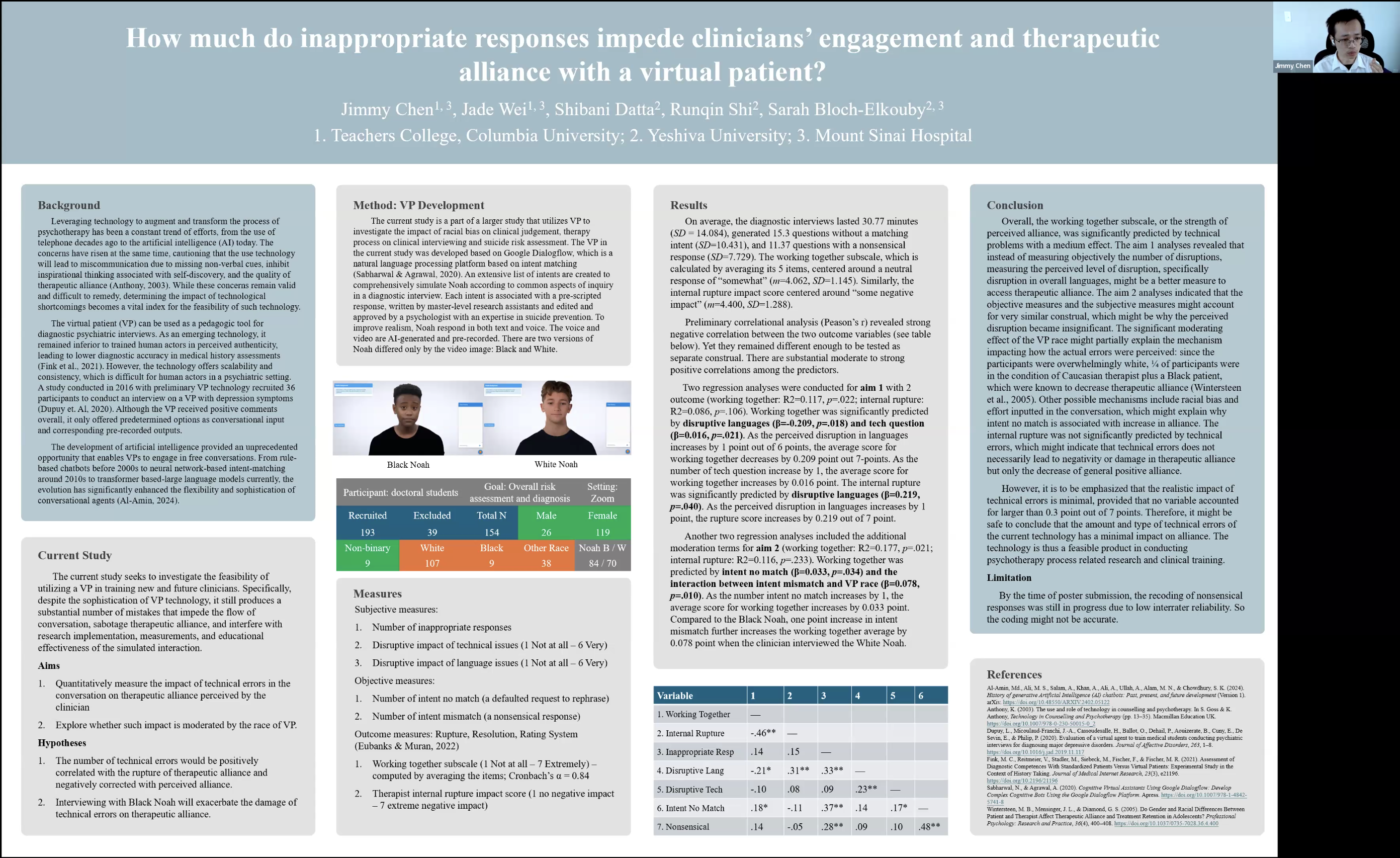

The current study is a part of a larger study that utilizes VP to investigate the impact of racial bias on clinical judgement, therapy process on clinical interviewing and suicide risk assessment. The VP in the current study was developed based on Google Dialogflow, which is a natural language processing platform based on intent matching (Sabharwal & Agrawal, 2020). An extensive list of intents are created to comprehensively simulate Noah according to common aspects of inquiry in a diagnostic interview. Each intent is associated with a pre-scripted response, written by master-level research assistants and edited and approved by a psychologist with an expertise in suicide prevention. To improve realism, Noah respond in both text and voice. The voice and video are AI-generated and pre-recorded. There are two versions of Noah differed only by the video image: Black and White.

Results:

On average, the diagnostic interviews lasted 30.77 minutes (SD = 14.084), generated 15.3 questions without a matching intent (SD=10.431), and 11.37 questions with a nonsensical response (SD=7.729). The working together subscale, which is calculated by averaging its 5 items, centered around a neutral response of “somewhat” (m=4.062, SD=1.145). Similarly, the internal rupture impact score centered around “some negative impact” (m=4.400, SD=1.288).

Preliminary correlational analysis (Peason’s r) revealed strongnegative correlation between the two outcome variables (see poster below). Yet they remained different enough to be tested as separate construal. There are substantial moderate to strong positive correlations among the predictors.

Two regression analyses were conducted for aim 1 with 2 outcome (working together: R2=0.117, p=.022; internal rupture: R2=0.086, p=.106). Working together was significantly predicted by disruptive languages (β=-0.209, p=.018) and tech question (β=0.016, p=.021). As the perceived disruption in languages increases by 1 point out of 6 points, the average score for working together decreases by 0.209 point out 7-points. As the number of tech question increase by 1, the average score for working together increases by 0.016 point. The internal rupture was significantly predicted by disruptive languages (β=0.219, p=.040). As the perceived disruption in languages increases by 1 point, the rupture score increases by 0.219 out of 7 point.

Another two regression analyses included the additional moderation terms for aim 2 (working together: R2=0.177, p=.021; internal rupture: R2=0.116, p=.233). Working together was predicted by intent no match (β=0.033, p=.034) and the interaction between intent mismatch and VP race (β=0.078, p=.010). As the number intent no match increases by 1, the average score for working together increases by 0.033 point. Compared to the Black Noah, one point increase in intent mismatch further increases the working together average by 0.078 point when the clinician interviewed the White Noah.

Conclusion:

Overall, the working together subscale, or the strength of perceived alliance, was significantly predicted by technical problems with a medium effect. The aim 1 analyses revealed that instead of measuring objectively the number of disruptions, measuring the perceived level of disruption, specifically disruption in overall languages, might be a better measure to access therapeutic alliance. The aim 2 analyses indicated that the objective measures and the subjective measures might account for very similar construal, which might be why the perceived disruption became insignificant. The significant moderating effect of the VP race might partially explain the mechanism impacting how the actual errors were perceived: since the participants were overwhelmingly white, ¼ of participants were in the condition of Caucasian therapist plus a Black patient, which were known to decrease therapeutic alliance (Wintersteen et al., 2005). Other possible mechanisms include racial bias and effort inputted in the conversation, which might explain why intent no match is associated with increase in alliance. The internal rupture was not significantly predicted by technical errors, which might indicate that technical errors does not necessarily lead to negativity or damage in therapeutic alliance but only the decrease of general positive alliance.

However, it is to be emphasized that the realistic impact of technical errors is minimal, provided that no variable accounted for larger than 0.3 point out of 7 points. Therefore, it might be safe to conclude that the amount and type of technical errors of the current technology has a minimal impact on alliance. The technology is thus a feasible product in conducting psychotherapy process related research and clinical training.

Limitation:

By the time of poster submission, the recoding of nonsensical responses was still in progress due to low interrater reliability. So the coding might not be accurate.

References:

Al-Amin, Md., Ali, M. S., Salam, A., Khan, A., Ali, A., Ullah, A., Alam, M. N., & Chowdhury, S. K. (2024). History of generative Artificial Intelligence (AI) chatbots: Past, present, and future development (Version 1). arXiv. https://doi.org/10.48550/ARXIV.2402.05122

Anthony, K. (2003). The use and role of technology in counselling and psychotherapy. In S. Goss & K. Anthony, Technology in Counselling and Psychotherapy (pp. 13–35). Macmillan Education UK. https://doi.org/10.1007/978-0-230-50015-0_2

Dupuy, L., Micoulaud-Franchi, J.-A., Cassoudesalle, H., Ballot, O., Dehail, P., Aouizerate, B., Cuny, E., De Sevin, E., & Philip, P. (2020). Evaluation of a virtual agent to train medical students conducting psychiatric interviews for diagnosing major depressive disorders. Journal of Affective Disorders, 263, 1–8. https://doi.org/10.1016/j.jad.2019.11.117

Fink, M. C., Reitmeier, V., Stadler, M., Siebeck, M., Fischer, F., & Fischer, M. R. (2021). Assessment of Diagnostic Competences With Standardized Patients Versus Virtual Patients: Experimental Study in the Context of History Taking. Journal of Medical Internet Research, 23(3), e21196. https://doi.org/10.2196/21196

Sabharwal, N., & Agrawal, A. (2020). Cognitive Virtual Assistants Using Google Dialogflow: Develop Complex Cognitive Bots Using the Google Dialogflow Platform. Apress. https://doi.org/10.1007/978-1-4842-5741-8

Wintersteen, M. B., Mensinger, J. L., & Diamond, G. S. (2005). Do Gender and Racial Differences Between Patient and Therapist Affect Therapeutic Alliance and Treatment Retention in Adolescents? Professional Psychology: Research and Practice, 36(4), 400–408. https://doi.org/10.1037/0735-7028.36.4.400